Making Sure Risks are ALARP

Andy Brazier and Nick Wise explain why it’s so important to consider real-world situations in risk assessment

IN THE previous two articles1,2 we have highlighted that the various safety study methods available are the tools in your toolkit, and you should expect to use them wisely to allow you to decide whether risks are as low as reasonably practicable (ALARP). This final article in the series illustrates how even the fundamental safety principles that we are all familiar with (including inherent safety, hierarchy of risk controls, continuous improvement and being cautious) have to be applied sensibly when deciding whether overall risks are ALARP; and why it is so important to consider real-world situations.

Inherent safety

We have, as an industry, been aware of inherent safety since the 1970s, thanks to a great extent to the late Trevor Kletz. Although he said that he did not develop the original idea, it is very clear that he did a great deal to advance and promote it, to the point where it is now widely accepted as a fundamental safety concept3.

When we look back at the intervening decades, there has been an expansion in the use of engineered risk controls, particularly safety instrumented systems (SIS). This appears to be at odds with the principles of inherent safety, which Kletz summarised with the following concepts4:

- “What you don’t have, can’t leak.”

- “People who are not there can’t be killed.”

- “The more complicated a system becomes, the more opportunities there are for equipment failure and human error.”

The best time to consider inherent safety is very early in a new project (typically, the concept phase). This may give you the impression that after this stage, and particularly once a system is operational, the opportunity has been missed. There may be fewer options available, but inherent safety should still be considered as part of safety studies performed later in projects, when planning modifications, when revalidating safety reports/cases and when identifying actions following an incident investigation3. The principles can also be applied to routine work, for example by reducing stored inventories and when preparing plant for maintenance.

Inherent safety is often viewed as a binary outcome - either fully achieved or not at all. Certain hazards may be integral to your process, so there is no viable option to eliminate them, and you may assume that inherent safety does not apply unless you are prepared to completely change your business. This is not the case.

Understanding the ‘gap’ between current arrangements and the inherently safe solution can be particularly useful, especially when deciding what additional risk controls should be in place. If the gap is large, there will be a high reliance on add-on risk controls. In many cases, the gap may be quite small or only exist for certain modes of operation (eg plant startup).

Whilst you should be looking for opportunities to apply inherent safety at all times, eliminating or reducing your immediate risks may only mean that they are transferred elsewhere. For example, choosing not to make a product in order to eliminate a hazard may simply mean that production is moved to another site, possibly in another country3. The alternative site may apply lower safety standards, and risks from transport will have increased. This raises some complex societal risk conundrums, which you may think are not your responsibility. You may be able to argue that continuing local manufacturing is the inherently safer option because you have better control of the risks. This highlights why inherent safety cannot be seen as a simple, binary decision.

Hierarchy of risk control

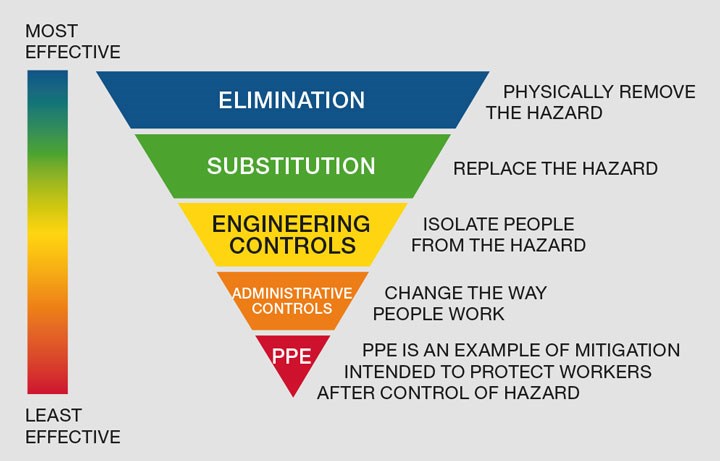

The hierarchy of risk control (see Figure 1) is another fundamental safety concept. Controls at the top of the hierarchy are generally more reliable. Controls at the bottom of the hierarchy are generally easier to implement once a system has been designed or is in operation, but are less effective.

There are clear overlaps between the top of the hierarchy (elimination and substitution) and inherent safety. This is a useful reminder that you have multiple approaches when evaluating risks, and that making clear-cut distinctions between them is neither necessary nor helpful3. One important difference is that inherent safety can reduce risk by reducing both potential consequences and likelihood. Other controls are mostly concerned with reducing likelihood.

The principles behind the hierarchy of risk control are very sound, but may give you the impression that you only need to consider administrative controls and mitigation if you cannot implement effective engineered controls. In reality, all engineered controls have their limitations. You should be considering risk controls from all parts of the hierarchy when deciding if risks are ALARP.

One of the arguments for discounting operational controls and mitigation is that they are vulnerable to human error. Hardware controls appear to be more reliable, but can only be relied on for the situations they are designed for. Human performance can vary dramatically and so people can appear to be less reliable. However, this is also a benefit because it allows people to deal with the variability of the real world, including non-routine, unplanned and unexpected situations.

Continuous improvement

The Plan-Do-Check-Act (PDCA) cycle is standard in many management systems. It can imply that you should always be doing something to improve safety. This may encourage you to keep adding more risk controls, especially if your organisation has not defined a level of risk that is considered to be broadly acceptable.

It is difficult to argue with the underlying principle of continuous improvement but it has limits. If effective controls are already in place, the risk reduction achieved by adding more will be negligible, and knock-on effects and unintended consequences may actually result in increased overall risk.

As an example, the introduction of digital control systems allowed more alarms to be added at minimal cost. The result was that operators became overloaded with nuisance alarms, which distracted them from proactively operating the system and from identifying and dealing with problems early.

The answer has to be to have the right controls in place, recognising their strengths and weaknesses. You should continuously review your risks to confirm they are still ALARP, but do not feel pressurised into adding or changing controls just to prove you are applying continuous improvement.

Being cautious in assessments

No one wants to be considered reckless where safety is a concern. Surely it is much better to be cautious and have a system that is safer than it needs to be? This may lead you to take the worst cases for both consequence and likelihood when using a risk assessment matrix, to input a greater hazardous event frequency when performing layer of protection analysis (LOPA), or to take a pessimistic view of the reliability of every risk control measure applied. However, this can force you into adding controls that are not really required in order to satisfy perceived risk targets.
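As a rough illustration of how cautious inputs compound, the sketch below assumes the simplest form of LOPA arithmetic, in which the mitigated event frequency is the initiating event frequency multiplied by the probability of failure on demand (PFD) of each independent protection layer. Every figure is hypothetical and chosen only to show the effect; the function name `mitigated_frequency` is ours and does not come from any standard or tool.

```python
from math import prod


def mitigated_frequency(initiating_per_year, pfds):
    """Initiating event frequency multiplied by the PFD of each layer."""
    return initiating_per_year * prod(pfds)


# Hypothetical "realistic" inputs: one initiating event every 10 years,
# protected by two layers with PFDs of 0.1 and 0.01.
realistic = mitigated_frequency(0.1, [0.1, 0.01])

# Hypothetical "cautious" inputs: each value is made a little more
# pessimistic (frequency x5, first PFD x2, second PFD x5).
cautious = mitigated_frequency(0.5, [0.2, 0.05])

ratio = cautious / realistic

print(f"realistic: {realistic:.1e} per year")
print(f"cautious:  {cautious:.1e} per year")
print(f"ratio:     {ratio:.0f}x")
```

With these made-up numbers, three individually modest adjustments multiply together so that the calculated frequency is about 50 times higher, which may be enough on its own to suggest that a further protection layer is needed.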

Caution can result in risk aversion where the objective becomes the avoidance of all known risks (see Figure 2). This can have immediate and significant impacts on business because the cost of safety will quickly spiral. Risk aversion can also lead to poor management of risks in practice: people may feel compelled to circumvent systems to get the job done, or they may lose the ability to deal with risks effectively.

There is no harm in taking a cautious approach as long as you acknowledge and record it as such, and the outcome is a sensible set of risk controls. However, be prepared to challenge additional controls resulting from overly-cautious assessments if these appear unreasonable based on your engineering judgement and experience.
